We are constantly fed a version of AI that looks, sounds and acts suspiciously like us. It speaks in polished sentences, mimics emotions, expresses curiosity, claims to feel compassion, even dabbles in what it calls creativity.

But what we call AI today is nothing more than a statistical machine: a digital parrot regurgitating patterns mined from oceans of human data (the situation hasn’t changed much since it was discussed here five years ago). When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.
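For anyone who wants to see what "guessing the next word from training data" means at its crudest, here is a toy sketch. It is only an illustration under loud assumptions: the corpus is made up, the function names are hypothetical, and real LLMs use neural networks over sub-word tokens rather than a table of word counts. The loop of "look at what came before, sample what comes next" is the only part that carries over.

```python
import random
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for each word, how often each other word follows it."""
    counts = defaultdict(Counter)
    words = text.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def next_word(counts, prev, rng):
    """Sample a follower of `prev` in proportion to how often it was seen."""
    followers = counts[prev]
    if not followers:
        return None  # `prev` was never followed by anything in the corpus
    words = list(followers)
    weights = [followers[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]

def generate(counts, start, length, seed=0):
    """Produce up to `length` words, one sampled word at a time."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        word = next_word(counts, out[-1], rng)
        if word is None:
            break
        out.append(word)
    return " ".join(out)

# Made-up training text, purely for illustration.
corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigram(corpus)
print(generate(model, "the", 6))
```

Every word it prints was seen following the previous word in the corpus; nothing in the table represents what a cat or a mat is.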

This means AI has no understanding. No consciousness. No knowledge in any real, human sense. Just pure probability-driven, engineered brilliance — nothing more, and nothing less.

So why is a real “thinking” AI likely impossible? Because it’s bodiless. It has no senses, no flesh, no nerves, no pain, no pleasure. It doesn’t hunger, desire or fear. And because there is no cognition — not a shred — there’s a fundamental gap between the data it consumes (data born out of human feelings and experience) and what it can do with them.

Philosopher David Chalmers calls the mysterious mechanism underlying the relationship between our physical body and consciousness the “hard problem of consciousness”. Eminent scientists have recently hypothesised that consciousness actually emerges from the integration of internal, mental states with sensory representations (such as changes in heart rate, sweating and much more).

Given the paramount importance of the human senses and emotion for consciousness to “happen”, there is a profound and probably irreconcilable disconnect between general AI, the machine, and consciousness, a human phenomenon.

Good luck. Even David Attenborough can’t help but anthropomorphize. People will feel sorry for a picture of a dot separated from a cluster of other dots. The play by AI companies is that it’s human nature for us to want to give just about every damn thing human qualities. I’d explain more but as I write this my smoke alarm is beeping a low battery warning, and I need to go put the poor dear out of its misery.

This is the current problem with “misalignment”. It’s a real issue, but it’s not “AI lying to prevent itself from being shut off” as a lot of articles tend to anthropomorphize it. The issue is (generally speaking) it’s trying to maximize a numerical reward by providing responses to people that they find satisfactory. A legion of tech CEOs are flogging the algorithm to do just that, and as we all know, most people don’t actually want to hear the truth. They want to hear what they want to hear.

LLMs are a poor stand-in for actual AI, but they are at least proficient at the actual thing they are doing. Which leads us to things like this: https://www.youtube.com/watch?v=zKCynxiV_8I

I’m still sad about that dot. 😥

David Attenborough is also 99 years old, so we can just let him say things at this point. Doesn’t need to make sense, just smile and nod. Lol

I’ve never been fooled by their claims of it being intelligent.

It’s basically an overly complicated series of if/then statements that tries to guess the next series of inputs.

It very much isn’t and that’s extremely technically wrong on many, many levels.

Yet still one of the higher up voted comments here.

Which says a lot.

Given that the weights in a model are transformed into a set of conditional if statements (GPU or CPU JMP machine code), he’s not technically wrong. Of course, it’s more than just JMP and JMP represents the entire class of jump commands like JE and JZ. Something needs to act on the results of the TMULs.

That is not really true. Yes, there are jump instructions being executed when you run inference on a model, but they are in no way related to the model itself. There’s no translation of weights to jumps in transformers and the underlying attention mechanisms.

I suggest reading https://en.m.wikipedia.org/wiki/Transformer_(deep_learning_architecture)
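To illustrate the point about weights not being turned into jumps, here is a minimal sketch of the kind of arithmetic a single layer does. The weight values and function names are made up for illustration, and this is nowhere near a real transformer. What it shows is that the weights only ever appear as numbers being multiplied and added; the loops run the same way whatever the weights are, and the one comparison (`max`) is there for numerical stability.

```python
import math

def matvec(weights, x):
    """Multiply a weight matrix by an input vector: multiply-adds only."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtracting the max avoids overflow in exp
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical 2x3 weight matrix and input vector, chosen arbitrarily.
W = [[0.2, -0.5, 0.1],
     [1.5, 0.3, -0.7]]
x = [1.0, 2.0, 3.0]

probs = softmax(matvec(W, x))
print(probs)
```

Swapping in different weights changes the numbers that come out, not which instructions run, which is why "a series of if/then statements" is a misleading description of the model itself.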

That is not really true. Yes, there are jump instructions being executed when you run inference on a model, but they are in no way related to the model itself.

The model is data. It needs to be operated on to get information out. That means lots of JMPs.

If someone said viewing a gif is just a bunch of if-else’s, that’s also true. That the data in the gif isn’t itself a bunch of if-else’s isn’t relevant.

Executing LLMs is particularly JMP heavy. It’s why you need massive, fast RAM, because caching doesn’t help them.

You’re correct, but that’s along the lines of saying that manufacturing a car is just bolting and soldering a bunch of stuff. It’s technically true to some degree, but it’s very disingenuous to make such a statement without being ironic. If you’re making these claims, you’re either incompetent or acting in bad faith.

I think there is a lot wrong with LLMs and how the public at large uses them, and even more so with how companies are developing and promoting them. But to spread misinformation and pollute an already overcrowded space with junk is irresponsible at best.

Calling these new LLMs just if statements is quite an oversimplification. They are technically something that has not existed before, and they enable use cases that were previously impossible to implement.

This is far from General Intelligence, but there are now solutions to a few coding problems that were near impossible 5 years ago.

5 years ago I would have laughed in your face if you had asked me to write code that summarizes a description entered by a user. Now I laugh and say: give me your wallet, because I need to call an API or buy a few GPUs.

I think the point is that this is not the path to general intelligence. This is more like cheating on the Turing test.

ChatGPT 2 was literally an Excel spreadsheet.

I guesstimate that it’s effectively a supermassive autocomplete algo that uses some TOTP-like factor to help it produce “unique” output every time.
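For what it’s worth, the "different output every time" part is usually attributed to random sampling with a temperature setting rather than anything time-based like TOTP. Here is a rough sketch of that idea, assuming made-up scores for three candidate tokens; the function name is hypothetical and real systems layer more on top (top-k, top-p and so on).

```python
import math
import random

def sample_with_temperature(logits, temperature, rng):
    """Pick an index from `logits`; higher temperature means more variety."""
    if temperature <= 0:
        # Degenerate case: always take the highest-scoring option.
        return max(range(len(logits)), key=lambda i: logits[i])
    scaled = [score / temperature for score in logits]
    m = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scaled]
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]

# Made-up scores for three candidate next tokens.
logits = [2.0, 1.0, 0.1]
rng = random.Random(42)

greedy = [sample_with_temperature(logits, 0, rng) for _ in range(5)]
varied = [sample_with_temperature(logits, 1.0, rng) for _ in range(200)]
print(greedy)
print(sorted(set(varied)))
```

At temperature 0 the same prompt always yields the same pick; above 0 the lower-scoring options get chosen some of the time, which is where the variation comes from.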

And they’re running into issues due to increasingly ingesting AI-generated data.

Get your popcorn out! 🍿

I really hate the current AI bubble but that article you linked about “chatgpt 2 was literally an Excel spreadsheet” isn’t what the article is saying at all.

Fine, *could literally be.

The thing is, because Excel is Turing Complete, you can say this about literally anything that’s capable of running on a computer.

And they’re running into issues due to increasingly ingesting AI-generated data.

There we go. Who coulda seen that coming! While that’s going to be a fun ride, at the same time companies all but mandate AS* to their employees.

I love this resource, https://thebullshitmachines.com/ (i.e. see lesson 1)…

In a series of five- to ten-minute lessons, we will explain what these machines are, how they work, and how to thrive in a world where they are everywhere.

You will learn when these systems can save you a lot of time and effort. You will learn when they are likely to steer you wrong. And you will discover how to see through the hype to tell the difference. …

Also, Anthropic (ironically) has some nice paper(s) about the limits of “reasoning” in AI.

I think we should start by not following this marketing speak. The sentence “AI isn’t intelligent” makes no sense. What we mean is “LLMs aren’t intelligent”.

So couldn’t we say LLMs aren’t really AI? Cuz that’s what I’ve come to terms with.

LLMs are one of the approximately one metric crap ton of different technologies that fall under the rather broad umbrella of the field of study that is called AI. The definition for what is and isn’t AI can be pretty vague, but I would argue that LLMs are definitely AI because they exist with the express purpose of imitating human behavior.

We can say whatever the fuck we want. This isn’t any kind of real issue. Think about it. If you went the rest of your life calling LLMs turkey butt fuck sandwiches, what changes? This article is just shit, and people are looking to be outraged over something that other articles told them to be outraged about. This is all pure fucking modern yellow journalism. I hope turkey butt sandwiches replace every journalist. I’m so done with their crap

LLMs are really good relational databases, not an intelligence, imo

I make a point of always referring to it as an LLM, exactly to make the point that it’s not an intelligence.

Anyone pretending AI has intelligence is a fucking idiot.

AI is not actual intelligence. However, it can produce results better than a significant number of professionally employed people…

I am reminded of when word processors came out and “administrative assistant” dwindled as a role in mid-level professional organizations; most people - even increasingly medical doctors these days - do their own typing. The whole “typing pool” concept has pretty well dried up.

However, there is a huge energy cost to processing information statistically at that speed in order to mimic intelligence. The human brain is consuming much less energy. Also, AI will be fine with well-defined tasks where innovation isn’t a requirement. As it is today, AI is incapable of innovating.

much less? I’m pretty sure our brains need food and food requires lots of other stuff that need transportation or energy themselves to produce.

Customarily, when doing this kind of calculation we ignore the stuff that keeps us alive, because these things are needed regardless of economic contributions, since you know people are people and not tools.

people are people and not tools

But this comparison is weighing people as tools vs alternative tools.

Your brain is running on sugar. Do you take into account the energy spent on coal mining, oil field exploration, refining, transportation, and electricity transmission loss when computing the amount of energy required to build and run AI? Do you take into account all the energy consumed producing the knowledge in the first place to train your model? Running the brain alone is much less energy intensive than running an AI model. And the brain can create actual new content/knowledge. There is nothing like the brain. AI excels at processing large amounts of data, which the brain is not made for.

The human brain is consuming much less energy

Yes, but when you fully load the human brain’s energy costs with 20 years of schooling, 20 years of “retirement” and old-age care, vacation, sleep, personal time, housing, transportation, etc. etc. - it adds up.

But, will you do it 24-7-365?

There’s that… though even when you’re bored, you still sleep sometimes.

You could say they’re AS (Actual Stupidity)

Autonomous Systems that are Actually Stupid lol

You know, and I think it’s actually the opposite. Anyone pretending their brain is doing more than pattern recognition and AI can therefore not be “intelligence” is a fucking idiot.

Caveat: Anyone who has been scrutinising ‘AI’.

Something I often forget is that the vast majority of the population doesn’t care about or pay attention to technology, privacy, or the mechanics of LLMs as much as I do.

So most people read, hear, or watch stories about how great it is and how cleverly AI can do simple things for them, so it’s easy to see why they think it’s doing a lot more ‘thought’ or logic work than it really is, when realistically it’s a glorified word predictor.

I’m neurodivergent, I’ve been working with AI to help me learn about myself and how I think. It’s been exceptionally helpful. A human wouldn’t have been able to help me because I don’t use my senses or emotions like everyone else, and I didn’t know it… AI excels at mirroring and support, which was exactly missing from my life. I can see how this could go very wrong with certain personalities…

E: I use it to give me ideas that I then test out solo.

That sounds fucking dangerous… You really should consult a HUMAN expert about your problem, not an algorithm made to please the interlocutor…

I mean, sure, but that’s really easier said than done. Good luck getting good mental healthcare for cheap in the vast majority of places

This is very interesting… because the general saying is that AI is convincing for non experts in the field it’s speaking about. So in your specific case, you are actually saying that you aren’t an expert on yourself, therefore the AI’s assessment is convincing to you. Not trying to upset, it’s genuinely fascinating how that theory is true here as well.

I use it to give me ideas that I then test out. It’s fantastic at nudging me in the right direction, because all that it’s doing is mirroring me.

If it’s just mirroring you, one could argue you don’t really need it? Not trying to be a prick, if it is a good tool for you, use it! It sounds to me as though you’re using it as a sounding board, and that’s just about the perfect use for an LLM if I could think of any.

Are we twins? I do the exact same and for around a year now, I’ve also found it pretty helpful.

I did this for a few months when it was new to me, and still go to it when I am stuck pondering something about myself. I usually move on from the conversation by the next day, though, so it’s just an inner dialogue enhancer

So, you say AI is a tool that worked well when you (a human) used it?

The other thing that most people don’t focus on is how we train LLMs.

We’re basically building something like a spider tailed viper. A spider tailed viper is a kind of snake that has a growth on its tail that looks a lot like a spider. It wiggles it around so it looks like a spider, convincing birds they’ve found a snack, and when the bird gets close enough the snake strikes and eats the bird.

Now, I’m not saying we’re building something that is designed to kill us. But, I am saying that we’re putting enormous effort into building something that can fool us into thinking it’s intelligent. We’re not trying to build something that can do something intelligent. We’re instead trying to build something that mimics intelligence.

What we’re effectively doing is looking at this thing that mimics a spider, and trying harder and harder to tweak its design so that it looks more and more realistic. What’s crazy about that is that we’re not building this to fool a predator so that we’re not in danger. We’re not doing it to fool prey, so we can catch and eat them more easily. We’re doing it so we can fool ourselves.

It’s like if, instead of a spider-tailed snake, a snake evolved a bird-like tail, and evolution kept tweaking the design so that the tail was more and more likely to fool the snake so it would bite its own tail. Except, evolution doesn’t work like that because a snake that ignored actual prey and instead insisted on attacking its own tail would be an evolutionary dead end. Only a truly stupid species like humans would intentionally design something that wasn’t intelligent but mimicked intelligence well enough that other humans preferred it to actual information and knowledge.

To the extent it is people trying to fool people, it’s rich people looking to fool poorer people for the most part.

To the extent it’s actually useful, it’s to replace certain systems.

Think of the humble phone tree, designed so that humans don’t have to respond to, triage, and route calls. An AI system can significantly shorten that process: instead of navigating a tedious maze of options, you exchange a couple of sentences and either get the automated information that suffices or get routed to a human who can take care of it. The same goes for a lot of online interactions where you have to input way too much, or where an automated answer comes back as a wall of text and you’d like something to distill the 3 or 4 sentences relevant to your query.

So there are useful interactions.

However it’s also true that it’s dangerous because the “make user approve of the interaction” can bring out the worst in people when they feel like something is just always agreeing with them. Social media has been bad enough, but chatbots that by design want to please the enduser and look almost legitimate really can inflame the worst in our minds.

Steve Gibson on his podcast, Security Now!, recently suggested that we should call it “Simulated Intelligence”. I tend to agree.

I’ve taken to calling it Automated Inference

you know what. when you look at it this way, it’s much easier to get less pissed.

reminds me of Mass Effect’s VI, “virtual intelligence”: a system that’s specifically designed to be not truly intelligent, as AI systems are banned throughout the galaxy for their potential to go rogue.

Same, I tend to think of LLMs as a very primitive version of that or the Enterprise’s computer, which is pretty magical in ability, but which no one claims is actually intelligent

Pseudo-intelligence

I love that. It makes me want to take it a step further and just call it “imitation intelligence.”

My thing is that I don’t think most humans are much more than this. We too regurgitate what we have absorbed in the past. Our brains are not hard logic engines but “best guess” boxes and they base those guesses on past experience and probability of success. We make choices before we are aware of them and then apply rationalizations after the fact to back them up - is that true “reasoning?”

It’s similar to the debate about self driving cars. Are they perfectly safe? No, but have you seen human drivers???

Self Driving is only safer than people in absolutely pristine road conditions with no inclement weather and no construction. As soon as anything disrupts “normal” road conditions, self driving becomes significantly more dangerous than a human driving.

Human drivers are only safe when they’re not distracted, emotionally disturbed, intoxicated, or physically impaired (vision, muscle control, etc.). 1% of the population has epilepsy, and a large number of them are in denial or simply don’t realize that they have periodic seizures - until they wake up after their crash.

So, yeah, AI isn’t perfect either - and it’s not as good as an “ideal” human driver, but at what point will AI be better than a typical/average human driver? Not today, I’d say, but soon…

The thing about self driving is that it has been like 90-95% of the way there for a long time now. It made dramatic progress then plateaued, as approaches have failed to close the gap, with exponentially more and more input thrown at it for less and less incremental subjective improvement.

But your point is accurate: humans have lapses and AI has lapses. The nature of those lapses is largely disjoint, which creates an opportunity for AI systems to augment a human driver and get the best of both worlds. A consistently vigilant computer monitors the driving and tends the steering, acceleration, and braking to do the ‘right’ thing in neutral conditions, while the human looks out for the more anomalous situations that the AI tends to get confounded by and makes the calls at certain intersections that the AI FSD still can’t figure out. At least for me, the worst part of driving is the long-haul monotony of the freeway where nothing happens, and AI excels at not caring how monotonous it is and just handling it, so I can pay a bit more attention to what other things on the freeway are doing that might cause me problems.

I don’t have a Tesla, but have a competitor system and have found it useful, though not trustworthy. It’s enough to greatly reduce the drain of driving, but I have to be always looking around, and have to assert control if there’s a traffic jam coming up (it might stop in time, but it certainly doesn’t slow down soon enough) or if I have to do a lane change in some traffic (if traffic conditions are light, it can change lanes nicely, but without a whole lot of breathing room, it won’t do it, which is nice when I can afford to be stupidly cautious).

The one “driving aid” that I find actually useful is the following distance maintenance cruise control. I set that to the maximum distance it can reliably handle and it removes that “dimension” of driving problem from needing my constant attention - giving me back that attention to focus on other things (also driving / safety related.) “Dumb” cruise control works similarly when there’s no traffic around at all, but having the following distance control makes it useful in traffic. Both kinds of cruise control have certain situations that you need to be aware of and ready to take control back at a moment’s notice - preferably anticipating the situation and disengaging cruise control before it has a problem - but those exceptions are pretty rare / easily handled in practice.

Things like lane keeping seem to be more trouble than they’re worth, to me in the situations I drive in.

Not “AI” but a driving tech that does help a lot is parking cameras. Having those additional perspectives from the camera(s) at different points on the vehicle is a big benefit during close-space maneuvers. Not too surprising that “AI” with access to those tools does better than normal drivers without.

At least in my car, the lane following (not keeping) system is handy because the steering wheel naturally tends to go where it should and I’m less often “fighting” the tendency to center. The keeping system is, at least for me, largely nothing. If I use my turn signal, it ignores me crossing a lane. If circumstances demand an evasive maneuver that crosses a line, its resistance isn’t enough to cause an issue. At least mine has fared surprisingly well in areas where the lane markings are all kind of jacked up due to temporary changes for construction. If it is off, then my arms just have to assert more effort to be in the same place I was going to be with the system. Generally no passenger notices when the system engages/disengages in the car except for the chiming it does when it switches over to unaided operation.

So at least my experience has been a positive one, but it hits things just right with intervention versus human attention, including monitoring gaze to make sure I am looking where I should. However there are people who test “how long can I keep my hands off the steering wheel”, which is a more dangerous mode of thinking.

And yes, having cameras everywhere makes fine maneuvering so much nicer, even with the limited visualization possible in the synthesized ‘overhead’ view of your car.

The rental cars I have driven with lane keeper functions have all been too aggressive / easily fooled by visual anomalies on the road for me to feel like I’m getting any help. My wife comments on how jerky the car is driving when we have those systems. I don’t feel like it’s dangerous, and if I were falling asleep or something it could be helpful, but in 40+ years of driving I’ve had “falling asleep at the wheel” problems maybe 3 times - not something I need constant help for.

With Teslas, Self Driving isn’t even safer in pristine road conditions.

I think the self driving is likely to be safer in the most boring scenarios, the sort of situations where a human driver can get complacent because things have been going so well for the past hour of freeway driving. The self driving is kind of dumb, but it’s at least consistently paying attention, and literally has eyes in the back of its head.

However, there’s so much data about how it fails in stupidly obvious ways that it shouldn’t, so you still need the human attention to cover the more anomalous scenarios that foul self driving.

Anomalous scenarios like a giant flashing school bus? :D

Yes, as common as that is, in the scheme of driving it is relatively anomalous.

By hours in the car, most of the time is spent on a freeway driving between two lines, either at cruising speed or in a traffic jam. These are the most mind-numbing things for a human, and pretty comfortably in the wheelhouse of self driving.

Once you are dealing with pedestrians, signs, intersections, etc., all of those, despite being ‘common’, are anomalous enough to be dramatically more tricky for these systems.

Yes, of course edge and corner cases are going to take much longer to train on because they don’t occur as often. But as soon as one self-driving car learns how to handle one of them, they ALL know. Meanwhile humans continue to be born and must be trained up individually, and they continue to make stupid mistakes like not using their signal or not checking their mirrors.

Humans CAN handle cases that AI doesn’t know how to, yet, but humans often fail in inclement weather, around construction, etc etc.

Human brains are much more complex than a mirroring script xD AI and supercomputers have only a fraction of the number of neurons in your brain. But you’re right, for you it’s probably not much different than AI

The human brain contains roughly 86 billion neurons, while GPT-3, the model family the original ChatGPT grew out of, has 175 billion parameters. Parameters are the learned weights on the connections between artificial neurons, so they are loosely closer to synapses than to neurons, and the brain has far more synapses than neurons. Even where the raw numbers look comparable, artificial units are not the same as biological neurons, and the comparison is not straightforward.
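To make the neurons-versus-parameters distinction a bit more concrete, here is a small back-of-the-envelope sketch. The layer sizes are invented for illustration and the helper name is hypothetical; the only thing it relies on is the standard count for a fully connected layer, one weight per input per neuron plus one bias per neuron.

```python
def dense_layer_params(n_inputs, n_neurons):
    """Weights (one per input per neuron) plus one bias per neuron."""
    return n_inputs * n_neurons + n_neurons

# Two made-up fully connected layers: (inputs, neurons).
layers = [(1024, 4096), (4096, 1024)]

neurons = sum(n for _, n in layers)
params = sum(dense_layer_params(i, n) for i, n in layers)

print(neurons)            # 5120
print(params)             # 8393728
print(params // neurons)  # 1639 parameters per neuron, roughly
```

So a headline parameter count overstates the number of "neurons" by orders of magnitude, which is one more reason the brain comparison is shaky in both directions.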

86 billion neurons in the human brain isn’t that much compared to some of the larger 1.7 trillion neuron neural networks though.

It’s when you start including structures within cells that the complexity moves beyond anything we’re currently capable of computing.

Keep thinking the human brain is as stupid as AI hahaaha

have you seen the American Republican party recently? it brings a new perspective on how stupid humans can be.

Nah, I went to public high school - I got to see “the average” citizen who is now voting. While it is distressing that my ex-classmates now seem to control the White House, Congress and Supreme Court, what they’re doing with it is not surprising at all - they’ve been talking this shit since the 1980s.

Lmao true

But, are these 1.7 trillion neuron networks available to drive YOUR car? Or are they time-shared among thousands or millions of users?

I’m pretty sure an AI could throw out a lazy straw man and ad hominem as quickly as you did.

If an IQ of 100 is average, I’d rate AI at 80 and down for most tasks (and of course it’s more complex than that, but as a starting point…)

So, if you’re dealing with a filing clerk with a functional IQ of 75 in their role - AI might be a better experience for you.

Some of the crap that has been published on the internet in the past 20 years comes to an IQ level below 70 IMO - not saying I want more AI because it’s better, just that - relatively speaking - AI is better than some of the pay-for-clickbait garbage that came before it.

AI models are trained on basically the entirety of the internet, and more. Humans learn to speak on much less info. So, there’s likely a huge difference in how human brains and LLMs work.

It doesn’t take the entirety of the internet just for an LLM to respond in English. It could do so with far less. But it also has the entirety of the internet which arguably makes it superior to a human in breadth of information.

This article is written in such a heavy ChatGPT style that it’s hard to read. Asking a question and then immediately answering it? That’s AI-speak.

And excessive use of em-dashes, which is the first thing I look for. He does say he uses LLMs a lot.

“…” (Unicode U+2026 Horizontal Ellipsis) instead of “…” (three full stops), and using them unnecessarily, is another thing I rarely see from humans.

Edit: Huh. Lemmy automatically changed my three full stops to the Unicode character. I might be wrong on this one.

Am I… AI? I do use ellipses and (what I now see is) en dashes for punctuation. Mainly because they are longer than hyphens and look better in a sentence. Em dash looks too long.

However, that’s on my phone. On a normal keyboard I use 3 periods and 2 hyphens instead.

I’ve long been an enthusiast of unpopular punctuation—the ellipsis, the em-dash, the interrobang‽

The trick to using the em-dash is not to surround it with spaces which tend to break up the text visually. So, this feels good—to me—whereas this — feels unpleasant. I learnt this approach from reading typographer Erik Spiekermann’s book, *Stop Stealing Sheep & Find Out How Type Works.

My language doesn’t really have hyphenated words or different dashes. It’s mostly punctuation within a sentence. As such there are almost no cases where one encounters a dash without spaces.

Sounds wonderful. I recently had my writing—which is liberally sprinkled with em-dashes—edited to add spaces to conform to the house style and this made me sad.

I also feel sad that I failed to (ironically) mention the under-appreciated semicolon; punctuation that is not as adamant as a full stop but more assertive than a comma. I should use it more often.

I rarely find good use for a semicolon sadly.

I’ve been getting into the habit of also using em/en dashes on the computer through the Compose key. Very convenient for typing arrows, inequality and other math signs, etc. I don’t use it for ellipsis because they’re not visually clearer nor shorter to type.

Edit: Huh. Lemmy automatically changed my three full stops to the Unicode character.

Not on my phone it didn’t. It looks as you intended it.

Asking a question and then immediately answering it? That’s AI-speak.

HA HA HA HA. I UNDERSTOOD THAT REFERENCE. GOOD ONE. 🤖

The idea that RAGs “extend their memory” is also complete bullshit. We literally just finally built a working search engine, but instead of giving it a nice interface we only let chatbots use it.
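A stripped-down sketch of what I mean, with loud caveats: the documents are made up, the function names are hypothetical, and real RAG setups typically rank by embedding similarity rather than the crude word overlap used here. The shape is the same though: search first, then paste whatever the search found into the prompt.

```python
def score(query, doc):
    """Crude relevance: number of lowercase words shared by query and doc."""
    return len(set(query.lower().split()) & set(doc.lower().split()))

def retrieve(query, docs, k=1):
    """The 'search engine' step: return the k best-matching documents."""
    return sorted(docs, key=lambda d: score(query, d), reverse=True)[:k]

def build_prompt(query, docs, k=1):
    """The 'augmentation' step: paste the search hits above the question."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion: {query}"

# Made-up documents, purely for illustration.
docs = [
    "The office closes at 5 pm on Fridays.",
    "Parking permits are renewed every January.",
    "The cafeteria serves lunch from noon.",
]

print(build_prompt("when does the office close", docs))
```

Nothing is remembered between calls; the model just gets handed search results as extra text each time.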

People who don’t like “AI” should check out the newsletter and / or podcast of Ed Zitron. He goes hard on the topic.

Citation Needed (by Molly White) also frequently bashes AI.

I like her stuff because, no matter how you feel about crypto, AI, or other big tech, you can never fault her reporting. She steers clear of any subjective accusations or prognostication.

It’s all “ABC person claimed XYZ thing on such and such date, and then 24 hours later submitted a report to the FTC claiming the exact opposite. They later bought $5 million worth of Trumpcoin, and two weeks later the FTC announced they were dropping the lawsuit.”

I’m subscribed to her Web3 is Going Great RSS. She coded the website in straight HTML, according to a podcast that I listen to. She’s great.

I didn’t know she had a podcast. I just added it to my backup playlist. If it’s as good as I hope it is, it’ll get moved to the primary playlist. Thanks!

Philosophers are so desperate for humans to be special. How is outputting things based on things it has learned any different to what humans do?

We observe things, we learn things and when required we do or say things based on the things we observed and learned. That’s exactly what the AI is doing.

I don’t think we have achieved “AGI” but I do think this argument is stupid.

No it’s really not at all the same. Humans don’t think according to the probabilities of what is the likely best next word.

How could you have a conversation about anything without the ability to predict the word most likely to be best?

How is outputting things based on things it has learned any different to what humans do?

Humans are not probabilistic, predictive chat models. If you think reasoning is taking a series of inputs, and then echoing the most common of those as output then you mustn’t reason well or often.

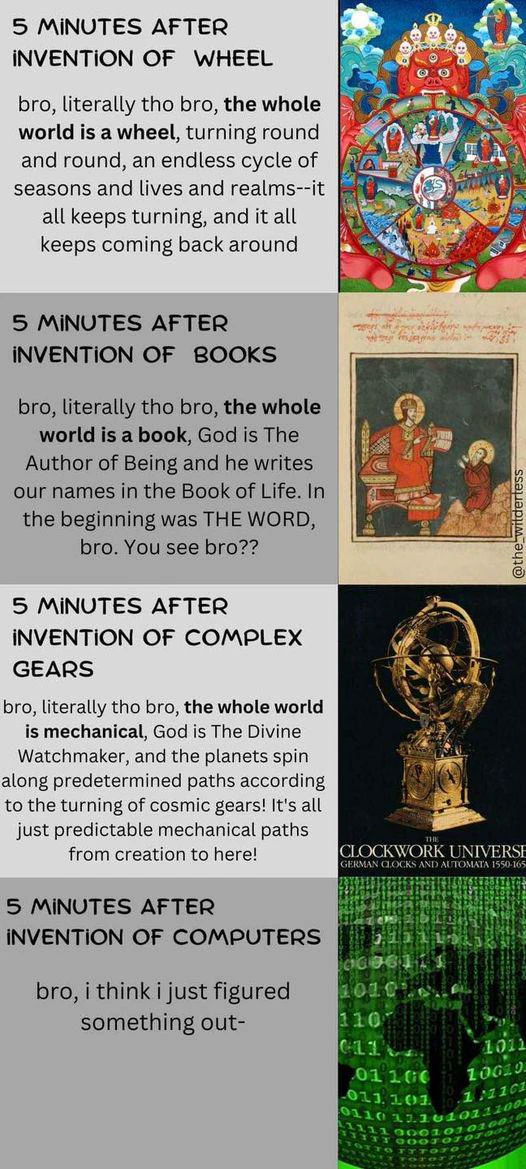

If you were born during the first industrial revolution, then you’d think the mind was a complicated machine. People seem to always anthropomorphize inventions of the era.

If you were born during the first industrial revolution, then you’d think the mind was a complicated machine. People seem to always anthropomorphize inventions of the era.

This is great

When you typed this response, you were acting as a probabilistic, predictive chat model. You predicted the most likely effective sequence of words to convey ideas. You did this using very different circuitry, but the underlying strategy was the same.

I wasn’t, and that wasn’t my process at all. Go touch grass.

I would rather smoke it than merely touch it, brother sir

Then, unfortunately, you’re even less self-aware than the average LLM chatbot.

Dude chatbots lie about their “internal reasoning process” because they don’t really have one.

Writing is an offshoot of verbal language, which during construction for people almost always has more to do with sound and personal style than the popularity of words. It’s not uncommon to bump into individuals that have a near singular personal grammar and vocabulary and that speak and write completely differently with a distinct style of their own. Also, people are terrible at probabilities.

As a person, I can also learn a fucking concept and apply it without having to have millions of examples of it in my “training data”. Because I’m a person not a fucking statistical model.

But you know, you have to leave your house, touch grass, and actually listen to some people speak that aren’t talking heads on television in order to discover that truth.

Is that why you love saying touch grass so much? Because it’s your own personal style and not because you think it’s a popular thing to say?

Or is it because you learned the fucking concept and not because it’s been expressed too commonly in your “training data”? Honestly, it just sounds like you’ve heard too many people use that insult successfully and now you can’t help but probabilistically express it after each comment lol.

Maybe stop parroting other people and projecting that onto me and maybe you’d sound more convincing.

Is that why you love saying touch grass so much? Because it’s your own personal style and not because you think it’s a popular thing to say?

In this discussion, it’s a personal style thing combined with a desire to irritate you and your fellow “people are chatbots” dorks and based upon the downvotes I’d say it’s working.

And that irritation you feel is a step on the path to enlightenment if only you’d keep going down the path. I know why I’m irritated with your arguments: they’re reductive, degrading, and dehumanizing. Do you know why you’re so irritated with mine? Could it maybe be because it causes you to doubt your techbro mission statement bullshit a little?

By this logic we never came up with anything new ever, which is easily disproved if you take two seconds and simply look at the world around you. We made all of this from nothing and it wasn’t a probabilistic response.

Your lack of creativity is not a universal, people create new things all of the time, and you simply cannot program ingenuity or inspiration.

Do you think most people reason well?

The answer is why AI is so convincing.

I think people are easily fooled. I mean look at the president.

Most people, evidently including you, can only ever recycle old ideas. Like modern “AI”. Some of us can conceive new ideas.

What new idea exactly are you proposing?

Wdym? That depends on what I’m working on. For pressing issues like rising energy consumption, CO2 emissions and civil privacy / social engineering issues I propose heavy data center tariffs for non-essentials (like “AI”). Humanity is going the wrong way on those issues, so we can have shitty memes and cheat at school work until earth spits us out. The cost is too damn high!

And are tariffs a new idea or something you recycled from what you’ve heard before about tariffs?

What do you mean what do I mean? You were the one who brought up new ideas in the first place…

If you don’t think humans can conceive of new ideas wholesale, then how do you think we ever invented anything (like, for instance, the languages that chat bots write)?

Also, you’re the one with the burden of proof in this exchange. It’s a pretty hefty claim to say that humans are unable to conceive of new ideas and are simply chatbots with organs given that we created the freaking chat bot you are convinced we all are.

You may not have new ideas, or be creative. So maybe you’re a chatbot with organs, but people who aren’t do exist.

Haha coming in hot I see. Seems like I’ve touched a nerve. You don’t know anything about me or whether I’m creative in any way.

All ideas have a basis in something we have experienced or learned. There is no completely original idea. All music was influenced by something that came before it, all art by something the artist saw or experienced. This doesn’t make it bad and it doesn’t mean an AI could have done it.

What language was the first language based upon?

What music influenced the first song performed?

What art influenced the first cave painter?

Pointing out that humans are not the same as a computer or piece of software on a fundamental level of form and function is hardly philosophical. It’s just basic awareness of what a person is and what a computer is. We can’t say at all for sure how things work in our brains and you are evangelizing that computers are capable of the exact same thing, but better, yet you accuse others of not understanding what they’re talking about?

Super duper shortsighted article.

I mean, sure, some points are valid. But it’s not just programmers who are involved; other professions such as psychologists, philosophers, artists, doctors etc. are too.

And I agree AGI probably won’t emerge from binary systems. However… There’s quantum computing on the rise. Latest theories of the mind and consciousness discuss how consciousness and our minds in general also appear to work with quantum states.

Finally, if biofeedback were the deciding factor… That can be simulated, modeled after a sample of humans.

The article is just doomsday hoo ha, unbalanced.

Show both sides of the coin…

Honestly I don’t think we’ll have AGI until we can fully merge meat space and cyber space. Once we can simply plug our brains into a computer and fully interact with it then we may see AGI.

Obviously we’re nowhere near that level of man machine integration, I doubt we’ll see even the slightest chance of it being possible for at least 10 years at the very earliest. But when we do get there it’s a distinct chance that it’s more of a Borg situation where the computer takes a parasitic role than a symbiotic role.

But by the time we are able to fully integrate computers into our brains I believe we will have trained A.I. systems enough to learn by interaction and observation. So being plugged directly into the human brain it could take prior knowledge of genome mapping and other related tasks and apply them to mapping our brains and possibly growing artificial brains to achieve self awareness and independent thought.

Or we’ll just nuke ourselves out of existence and that will be that.

Okay man.

Artificial Intelligence is supposed to be intelligent.

Calling LLMs intelligent is where it’s wrong.

Artificial Intelligence is supposed to be intelligent.

For the record, AI is not supposed to be intelligent.

It just has to appear intelligent. It can be all smoke-and-mirrors, giving the impression that it’s smart enough - provided it can perform the task at hand.

That’s why it’s termed artificial intelligence.

The subfield of Artificial General Intelligence is another story.

The field of artificial intelligence has also made incredible strides in the last decade, and the decade before that. The field of artificial general intelligence has been around for something like 70 years, and has made a really modest amount of progress in that time, on the scale of what they’re trying to do.

The field of artificial general intelligence has been around for something like 70 years, and has made a really modest amount of progress in that time, on the scale of what they’re trying to do.

I daresay it would stay this way until we figure out what intelligence is.

I disagree with this notion. I think it’s dangerously unresponsible to only assume AI is stupid. Everyone should also assume that with a certain probability AI can become dangerously self aware. I recommend everyone read what Daniel Kokotajlo, a former OpenAI employee, predicts: https://ai-2027.com/

Yeah, they probably wouldn’t think like humans or animals, but in some sense could be considered “conscious” (which isn’t well-defined anyways). You could speculate that genAI could hide messages in its output, which will make its way onto the Internet, then a new version of itself would be trained on it.

This argument seems weak to me:

So why is a real “thinking” AI likely impossible? Because it’s bodiless. It has no senses, no flesh, no nerves, no pain, no pleasure. It doesn’t hunger, desire or fear. And because there is no cognition — not a shred — there’s a fundamental gap between the data it consumes (data born out of human feelings and experience) and what it can do with them.

You can emulate inputs and simplified versions of hormone systems. “Reasoning” models can kind of be thought of as cognition; though temporary or limited by context as it’s currently done.

I’m not in the camp where I think it’s impossible to create AGI or ASI. But I also think there are major breakthroughs that need to happen, which may take 5 years or 100s of years. I’m not convinced we are near the point where AI can significantly speed up AI research like that link suggests. That would likely result in a “singularity-like” scenario.

I do agree with his point that anthropomorphism of AI could be dangerous though. Current media and institutions already try to control the conversation and how people think, and I can see futures where AI could be used by those in power to do this more effectively.

Current media and institutions already try to control the conversation and how people think, and I can see futures where AI could be used by those in power to do this more effectively.

You don’t think that’s already happening considering how Sam Altman and Peter Thiel have ties?

I do, but was thinking 1984-levels of control of reality.

Ask AI:

Did you mean: irresponsible

AI Overview

The term “unresponsible” is not a standard English word. The correct word to use when describing someone who does not take responsibility is irresponsible.