- cross-posted to:

- technology@lemmy.world

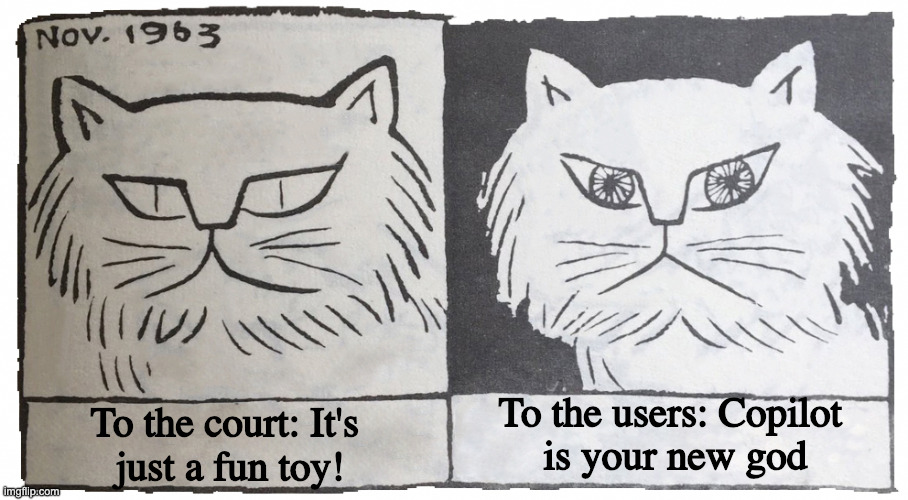

Microsoft forces CoPilot on you.

“Don’t rely on Copilot for important advice. Use Copilot at your own risk.”

My company says it’s the only one I can use. And we have to use it, cause someone said so.

My company is the same way. I laugh because 80% of my day is sending emails through a shared email box and the company specifically turned off co-pilot in the shared email boxes.

Use it… But don’t??

Yes but also no

If only there was a category of laws that punish you for advertising something in deceptive ways.

One could dream.

… So, they are just straight up saying that the primary ‘feature’ they’ve been pushing hard for the last 3 years… is actually a literal, next-level clown show?

If you know anyone who works at Microsoft, please do remind them that they are an evil clown, who is in an actual cult.

Forces copilot on the unwilling, puts copilot in everything it doesn’t belong in, replaces usable features with copilot, tells everyone copilot will solve all their problems, and fills everyone with false promises about what copilot is capable of…

^Disclaimer: copilot is for entertainment purposes only and makes mistakes; don’t use it for anything critical.^

What’s the original? I like the style

I’m sorry, I don’t know where it’s originally from, I found it here: https://imgflip.com/memegenerator/406680675/Hypocrite-Cat

Thanks!

Ah yes, just for entertainment purposes only; that is exactly why we are pumping billions of dollars into it in such a way that basically the entire economy is standing on it now and destroying the world along with it. Entertainment!

All of those advertisements and recommended prompts about various topics like health, mental health, facts, studying? All Entertainment!

Liability is going to be the death knell for broad scale reliance on any of these LLMs. Removing liability is the only path towards profitability.

That’s the endgame, isn’t it? Not only is it going to be forced on us with promises that it will be our assistant we can delegate things to, but we will also be accountable for its calamities for “not having monitored it good enough”.

It’s like the tesla “self-driving” cars which were rushed through to production without thorough testing, then elon made all sorts of promises about how safe they were, but then they start every session with a disclaimer that says “driver must remain alert at all times; if the vehicle crashes, it’s your responsibility.”

What a fucking joke.

I work in radiology and I’ve been saying this for years. AI tools probably won’t replace us because of liability. We will have all of the liability while AI tools push us to work faster and faster for less money. I suspect this will happen with a lot of jobs.

I don’t know how it works with radiology, but my experience in software engineering is that reviewing the slop code takes more time than writing it, especially when it is crap and you have to send it back again and again and then review it.

At this point I either have to go through it honestly, which is extremely slow and frustrating to both sides, or accept the slop without review and then deal with the tech debt later.

Both options are bad.

Well in radiology we are searching images for specific findings so the generative slop problem isn’t the issue for us, it will be being overwhelmed with false positives or false negatives with a time pressure to go faster. I’ve been trying to follow the impacts of these models on the coding professions and I do not envy you at all. It really does seem like a rock and a hard place right now.

It takes less effort to eliminate the man whipping you than it takes to perform the work at the unreasonable rate your current whip demands. Delay work, Deny work, Defend your fellow workers. Turn the weapons of the oppression against it, UNITE, UNIONISE!

Like some posts on the subs say, they probably want to staff hospitals less and put all the work on the few MDs they hire, squeezing more work out of them without hiring more radiologists.

And that’s one of the few fields where it makes sense too. A system that circles potential areas of interest on medical scans is a useful thing.

The trick is end users will be held accountable for things their AI does, while corporations and governments will say “an AI did it” and wash their hands of it.

The problem with this is that the corpos will fight each other over IP/copyright/licensing laws.

Yeah, if they can work out some kind of framework, then blam, that is neo/technofeudalism formalized.

But the problem is that they are, in addition to ravenously insane, greedy as fuck.

Our legal system is slowly building precedent for how this kind of shit will shake out in court… but there are no broadly well understood and clear guidelines here, there’s no framework for this.

But!

They’re doing move fast and break things with trillions of dollars.

And when the spinning plates start flying apart, they will eat each other, it will be complete fucking chaos, because they moved way faster than apparently their ability to even consider or estimate what the rules for this will look like.

They did not think any of this shit through, at all.

indeed isn’t it curious how copilot and other llms, fox news, gb news and hasan piker all play the “entertainment” card to avoid accountability and responsibility for the poisonous drivel they peddle

cowards

…Hasan Piker is a coward? Lol, okay. He’s a lot of things, and not all of them are great, but he’s certainly not a coward. People who watch him are quite sure he’s going to be shot some day. Piker is aware that the alt right is trying to make him a target of violence.

How often do you publicly challenge AIPAC?

oh ho! the martyrdom fantasy, you are deep in there i see

i challenge aipac 5 times daily and i don’t describe myself as an entertainer like the con-man, grifter, misogynist, animal abuser, fraud cowardly idiot that is little bro hasan

how many times do you watch ads for hasan to pay for his nice life

tell that to fox news users

One of the reasons corporations adopted computers was that they never made mistakes and solved tasks quickly and reliably. None of that is true anymore if you add AI into your workflow.

it’s like adding an extra layer of user error. why would you want to do that? as a dev myself it’s like the bullshit middle managers/project managers and CTOs introduced a few years ago with “pair programming” or whatever it’s called. the intention was to speed up development time but in the long run it just slowed you down. it was such a god damn dumb idea but was all the rage on the bullshit linkedin and tech bro blogs.

i’ve been in this industry long enough now to know the majority of tools and processes are created by people who don’t use said tools or processes.

Pair programming is kind of a weird thing to rail against. Sure, it would be terrible if it was mandated for everything, but there are many times it is a useful tool, especially for complex tasks where it is useful to talk through options as you go. Also invaluable for mentorship. I have regular sessions with juniors where we pair program on work tasks as a teaching tool.

it’s like adding an extra layer of user error.

An extra level of user error that you can’t audit.

I work as an office manager; an important part of my job is auditing all the contacts and payments to make sure they are being done properly. Then my work also gets audited on a regular basis.

A lot of time and money is spent making sure things are completed properly with the appropriate paperwork so that at any point someone can say “why did X happen?” and find all the related paperwork. AI can’t do that, it’s a black box.

It is like pair programming isn’t it, if your partner randomly hallucinated and couldn’t explain what they’d done after the fact.

What’s the issue with pair programming? I’ve never tried it though it seems productive in theory.

Middle managers’ entire reason for existence seems to be to slow things down and introduce mistakes.

Doesn’t that make them in violation of truth-in-advertising laws? If they’re marketing it as a serious productivity tool, but legally it’s “for entertainment purposes only”, then their ads claim it’s something that it’s not.

The legal system is just for the poors. Companies and rich folks don’t have legal consequences unless they hurt other rich people

I think it’s this:

They’re not wrong.

Watching Microslop fumble so hard is pretty entertaining.

The fox news defense?

It’s a Magic Eight Ball that devours resources to vomit up ornate platitudes.

I’m just so dumbfounded that this isn’t obvious to everyone who has 1. average intelligence, 2. a five minute explanation of how it works.

You should trust it exactly as much as a magic 8 ball. Alternatively, replace all source reference of “according to << favorite packaged LLM >> …” with “according to my 10 year old nephew who is playing a game of never-say-you-don’t-know…”.

Which isn’t to say that LLMs can’t be useful. But if you trust any fact based output from such a text generator, that you can’t (or don’t) verify yourself, you seem exactly as dumb and liable as if you said “but… but… the magic 8 ball said it would be fine!”.

And if you have to do the research yourself anyway to verify what the LLM spits out, you might as well start with that, forget the AI, and save time.

This is where I land.

The vast majority of my work, if I ran it through an LLM, would make it mandatory to do more testing and verification than is needed in the first place… so there’s no goddamn point.

And it’s not even just that. There are many reasons why using AI is detrimental, even when it’s supposed to (or does) make things “easier”.

Ok, so here’s how this works.

Step 1: Apparently you have never worked anywhere near ‘customer service’ in a tech related way.

Step 2: You are vastly, vastly overestimating the intelligence of the average user/person.

Sorry, most people are just fucking idiots who act far more competent, in general, at any/everything, than they actually are.

That’s it, there are no more steps.

Your baseline for ‘average person’ is actually more like top ~25 to ~10 % of people.

The average adult American reads at a 5th-6th grade level.

That is your actual average, the intelligence of an 11 year old.

The average adult American is your 10 y.o. nephew, just bigger, and more cocky.

Someone at work: OMG, I can’t believe I haven’t tried Copilot before, this is so great! Look, I asked it about how to do the thing in the framework and it came back and told me the pattern!

Me: Types the same prompt into Copilot, but replaces name of the framework with a very clearly made up word. Gets similar response telling me confidently how to do it in my made up framework.

Them: Ah, right. You did say bullshit generator, I get it now.

At least you can ask your nephew for a source. LLMs nowadays obfuscate their plagiarism quite well. Not like early ones where with any sufficiently advanced topic they started quoting the few scientific sources in their training data near directly.

That’s a whole lot of money and effort put into a thing that costs nothing to use “just for entertainment”.

They can’t do the ~~drug dealer~~ freemium rug pull yet, but that’s coming as soon as they think they have enough people hooked enough to force the conversion from free users to paid users.

If it’s free, you’re the product. Then, when they make it not free anymore, you pay to be the product.

Exactly