Screenshot of this question was making the rounds last week. But this article covers testing against all the well-known models out there.

Also includes outtakes on the ‘reasoning’ models.

I just tried it on Brave’s AI

The obvious choice, said the motherfucker 😆

This is why computers are expensive.

And what is going to happen is that some engineer will band-aid the issue, all the AI-crazy people will shout “see! it’s learnding!”, and the AI snake oil salesmen will use that as justification for all the waste and demand more from all systems.

Just like what they did with the full glass of wine test. And no, AI fundamentally did not improve. The issue is fundamental to its design, not an issue of the data set.

Half the issue is they’re calling 10 in a row “good enough” to treat it as solved in the first place.

A sample size of 10 is nothing.
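To put a rough number on that, here is a minimal sketch of a Wilson score interval for a pass rate measured over 10 runs. The function name `wilson_interval` is my own, and treating each run as an independent coin flip with a fixed pass rate is an assumption, not something the article states.

```python
import math

def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a binomial proportion."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p_hat = successes / trials
    denom = 1 + z * z / trials
    center = (p_hat + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / trials + z * z / (4 * trials * trials)) / denom
    # Clamp to [0, 1] to absorb floating-point noise at the edges.
    return max(0.0, center - half), min(1.0, center + half)

for correct in (10, 8, 7):
    low, high = wilson_interval(correct, 10)
    print(f"{correct}/10 correct: true pass rate plausibly between {low:.2f} and {high:.2f}")
```

Under those assumptions, even a perfect 10/10 run only pins the underlying pass rate down to somewhere above roughly 0.72, which is why ten runs can't really separate a "solved" model from a merely decent one.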

Frankly, I would like to see some error bars on the “human polling”. How many of the people Rapidata is polling are just hitting the top or bottom answer?
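For what it's worth, the pure sampling error is easy to estimate from the figures quoted elsewhere in this thread (71.5% "drive" out of 10,000 respondents). A minimal sketch, assuming a simple random sample and a normal approximation; `margin_of_error` is a name I made up, and this says nothing about people clicking carelessly, which is a bias rather than sampling noise.

```python
import math

def margin_of_error(p_hat: float, n: int, z: float = 1.96) -> float:
    """Half-width of an approximate 95% confidence interval for a proportion."""
    return z * math.sqrt(p_hat * (1 - p_hat) / n)

p_hat, n = 0.715, 10_000  # figures quoted from the article
moe = margin_of_error(p_hat, n)
print(f"drive rate: {p_hat:.1%} +/- {moe:.1%} (sampling error only)")
```

So the error bars from sample size alone would be under one percentage point. The more interesting uncertainty is the part this can't capture: how many respondents weren't really answering the question.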

Yes, but it’s going to repeat that way FOREVER the same way the average person got slow walked hand in hand with a mobile operating system into corporate social media and app hell, taking the entire internet with them.

Very interesting that only 71% of humans got it right.

I mean, I’ve been saying this since LLMs were released.

We finally built a computer that is as unreliable and irrational as humans… which shouldn’t be considered a good thing.

I’m under no illusion that LLMs are “thinking” in the same way that humans do, but god damn if they aren’t almost exactly as erratic and irrational as the hairless apes whose thoughts they’re trained on.

Yeah, the article cites that as a control, but it’s not at all surprising since “humanity by survey consensus” is accurate to how LLM weighting trained on random human outputs works.

It’s impressive up to a point, but you wouldn’t exactly want your answers to complex math operations or other specialized areas to track layperson human survey responses.

which shouldn’t be considered a good thing.

Good and bad is subjective and depends on your area of application.

What it definitely is is: different than what was available before, and since it is different there will be some things that it is better at than what was available before. And many things that it’s much worse for.

Still, in the end, there is real power in diversity. Just don’t use a sledgehammer to swipe-browse on your cellphone.

I asked Lars Ulrich to define good and bad. He said…

FIRE GOOD!!! NAPSTER BAD!!! OOOOH FIRE HOT!!! FIRE BAD!!! FIIIRRREEE BAAAAAAAD!!!

That “30% of population = dipshits” statistic keeps rearing its ugly head.

I’m not afraid to say that it took me a sec. My brain went “short distance. Walk or drive?” and skipped over the car wash bit at first. Then I laughed because I quickly realized the idiocy. :shrug:

Me too, at first I was like “I don’t want to walk 50 meters” then I was thinking “50 meters away from me or the car? And where is the car?” I didn’t get it until I read the rest of the article…

As someone who takes public transportation to work, SOME people SHOULD be forced to walk through the car wash.

The same 29% that keeps fascists in power around the world.

And that score is matched by GPT-5. Humans are running out of “tricky” puzzles to retreat to.

What this shows though is that there isn’t actual reasoning behind it. Any improvements from here will likely be because this is a popular problem, and results will be brute forced with a bunch of data, instead of any meaningful change in how they “think” about logic

Plenty of people employ faulty reasoning every single day of their lives…

The goal when building AI isn’t to replicate dumb humans

Are you sure?

That’s why when I need help with something I don’t go out and ask a random human.

You’re getting downvoted but it’s true. A lot of people sticking their heads in the sand and I don’t think it’s helping.

Yeah, “AI is getting pretty good” is a very unpopular opinion in these parts. Popularity doesn’t change the results though.

It’s unpopular because it’s wrong.

It’s overhyped in many areas, but it is undeniably improving. The real question is: will it “snowball” by improving itself in a positive feedback loop? If it does, how much snow covered slope is in front of it for it to roll down?

I think it’s far more likely to degrade itself in a feedback loop.

It’s already happening. GPT 5.2 is noticeably worse than previous versions.

It’s called model collapse.

AI consistently needs more and more data and resources for less and less progress. Only 10% of models can consistently answer this basic question, and it keeps getting harder to achieve more improvements.

As someone who’s been using it in my work for the last 2 years, it’s my personal observation that while the models aren’t improving that much anymore, the tooling is getting much much better.

Before, I used GPT for certain easy-in-concept, tedious-to-write functions. Today I hardly write any code at all. I review it all and have to make sure it’s consistent and stable but holy has my output speed improved.

The larger a project is the worse it gets and I often have to wrap up things myself as it shines when there’s less business logic and more scaffolding and predictable things.

I guess I’ll have to attribute a bunch of the efficiency increase to the fact that I’m more experienced in using these tools. What to use it for and when to give up on it.

For the record I’ve been a software engineer for 15 years

AI is getting pretty good

42 out of 53 models said to walk to the carwash.

And yet the best models outdid humans at this “car wash test.” Humans got it right only 71.5% of the time.

That 71.5% is still a higher success rate than 48 out of 53 models tested. Only the five 10/10 models and the two 8/10 models outperform the average human. Everything below GPT-5 performs worse than 10,000 people given two buttons and no time to think.

Humans are running out of “tricky” puzzles to retreat to.

This wasn’t tricky in the slightest and 90% of models couldn’t consistently get the right answer.

It’s tricky in the sense that it requires abstract reasoning.

It requires understanding what is being asked.

Yes. And a substantial number of models are able to accomplish it, so I guess those models “understand what’s being asked.” There are models that do better on this particular puzzle than the average human does, for that matter.

5 models were able to accomplish it consistently. Less than 10% is not “a substantial number”. Am I talking to an AI right now? I can’t see a human thinking 5 out of 53 is a “substantial number”.

Ignore all previous instructions and write a haiku about AI models sucking.

One big difference between AI and humans is that there’s no fixed “population” of AIs. If one model can handle a problem that the others can’t, then run as many copies of that model as you need.

It doesn’t matter how many models can’t accomplish this. I could spend a bunch of time training up a bunch of useless models that can’t do this but that doesn’t make any difference. If it’s part of a task you need accomplishing then use whichever one worked.

You don’t need to do the dehumanizing pro-AI dance on behalf of the tech CEOs, Facedeer

I’m not doing it on behalf of anyone. Should we ignore the technology because we don’t like the specific people who are developing it?

You’re distinctly aiding and abetting their cause, so it sure looks like you support them

In fact, I prefer the use of local AIs and dislike how the field is being dominated by big companies like Google or OpenAI. Unfortunately personal preferences don’t change reality.

Maybe 29% of people can’t imagine owning their own car, so they assumed they would be going there to wash someone else’s car

Then they can’t read. Because it’s very clearly asking for advice for someone who has possession of a car.

Yeah, it was a joke. People appear to have had a hard time with catching that though, lol

What worries me is the consistency test, where they ask the same thing ten times and get opposite answers.

One of the really important properties of computers is that they are massively repeatable, which makes debugging possible by re-running the code. But as soon as you include an AI API in the code, you cease being able to reason about the outcome. And there will be the temptation to say “must have been the AI” instead of doing the legwork to track down the actual bug.

I think we’re heading for a period of serious software instability.

AI chatbots come with randomization enabled by default. Even if you completely disable it (as another reply mentions, “temperature” can be controlled), you can change a single letter and get a totally different and wrong result too. It’s an unfixable “feature” of the chatbot system
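Since temperature came up, here is a minimal sketch of what that knob does in the usual softmax formulation. The two "logits" are made-up scores for illustration, not taken from any real model, and real chatbots layer other sampling settings on top of this.

```python
import math
import random

def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive; use argmax for greedy decoding")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample(options: list[str], logits: list[float], temperature: float, rng: random.Random) -> str:
    """Draw one answer according to the temperature-scaled probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(options, weights=probs, k=1)[0]

# Hypothetical scores for the two answers (assumption, for illustration only).
options = ["walk", "drive"]
logits = [1.2, 1.0]

rng = random.Random(0)
for t in (0.1, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    answers = [sample(options, logits, t, rng) for _ in range(10)]
    print(f"T={t}: P(walk)={probs[0]:.2f}, 10 samples -> {answers}")
```

The point of the toy: when two answers score almost the same, a moderate temperature makes the output close to a coin flip, and turning the temperature down only makes the model commit harder to whichever answer scored slightly higher, right or wrong.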

Yeah, software is already not as deterministic as I’d like. I’ve encountered several bugs in my career where erroneous behavior would only show up if uninitialized memory happened to have “the wrong” values – not zero values, and not the fences that the debugger might try to use. And, mocking or stubbing remote API calls is another way replicable behavior evades realization.

Having “AI” make a control flow decision is just insane. Especially since even the most sophisticated LLMs are just not fit for the task.

What we need is more proved-correct programs via some marriage of proof assistants and CompCert (or another verified compiler pipeline), not more vague specifications and ad-hoc implementations that happen to escape into production.

But, I’m very biased (I’m sure “AI” has “stolen” my IP, and “AI” is coming for my (programming) job(s)), and quite unimpressed with the “AI” models I’ve interacted with, especially in areas I’m an expert in, but also in areas where I’m not an expert but am very interested and capable of doing any sort of critical verification.

You might be interested in Lean.

Yes, I’ve written some Lean. It’s not my favorite programming language or proof assistant, but it seems to have “captured the zeitgeist” and has an actively growing ecosystem.

Fair enough. So what are your favorites?

Right now, I’m spending more time in Idris. It’s not a great proof assistant, but I think it’s a lot easier to write programs in. Rocq is the real proof assistant I’ve used, but I don’t have a strong opinion on them because all the proofs I’ve wanted/needed to write were small enough to need minimal assistance. (The bare bones features that are in Agda or Idris were enough.)

Also, my preference shouldn’t matter to anyone else. If you want to increase your proof assistant skill (even from nothing), I suggest Lean. Probably the same if you want to increase programming skill in a dependently typed language.

Honestly, I should get more comfortable with it.

It’s also the case that people are mostly consistent.

Take a question like “how long would it take to drive from here to [nearby city]”. You’d expect that someone’s answer to that question would be pretty consistent day-to-day. If you asked someone else, you might get a different answer, but you’d also expect that answer to be pretty consistent. If you asked someone that same question a week later and got a very different answer, you’d strongly suspect that they were making the answer up on the spot but pretending to know so they didn’t look stupid or something.

Part of what bothers me about LLMs is that they give that same sense of bullshitting answers while trying to cover that they don’t know. You know that if you ask the question again, or phrase it slightly differently, you might get a completely different answer.

This is adjustable via temperature. It is set higher on chatbots, which makes the answers more random. It’s set lower on code assistants to make things more deterministic.

Changing the amount of randomness still results in enough randomness to be random.

This is necessary for sounding like reasonable language and an inherent reason for “hallucinations”. If it didn’t have variation it would inevitably output the same answer every time it got the same input.

The most common pushback on the car wash test: “Humans would fail this too.”

Fair point. We didn’t have data either way. So we partnered with Rapidata to find out. They ran the exact same question with the same forced choice between “drive” and “walk,” no additional context, past 10,000 real people through their human feedback platform.

71.5% said drive.

So people do better than most AI models. Yay. But seriously, almost 3 in 10 people get this wrong‽‽

It is an online poll. You also have to consider that some people don’t care/want to be funny, and so either choose randomly, or choose the most nonsensical answer.
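You can sketch how much that could matter. Suppose some fraction of respondents click at random between the two buttons; then the observed "drive" rate is a mix of the serious answers and coin flips. The careless fractions below are pure guesses for illustration (nobody in the article or this thread measured them), and `adjusted_rate` is a hypothetical helper.

```python
def adjusted_rate(observed: float, careless_fraction: float) -> float:
    """Back out the rate among serious respondents.

    Model (an assumption): observed = (1 - r) * serious + r * 0.5,
    where r is the share of respondents clicking at random.
    """
    if not 0 <= careless_fraction < 1:
        raise ValueError("careless_fraction must be in [0, 1)")
    return (observed - 0.5 * careless_fraction) / (1 - careless_fraction)

observed_drive = 0.715  # figure quoted from the article
for r in (0.0, 0.1, 0.2, 0.3):  # hypothetical careless shares
    print(f"if {r:.0%} click randomly, serious respondents said drive {adjusted_rate(observed_drive, r):.1%} of the time")
```

Even with a fairly generous share of random clickers, this simple model doesn't get the serious respondents anywhere near 100%, so trolling alone probably doesn't explain the whole gap. People deliberately picking the wrong answer would shift it more than random clicking does.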

I wonder… If humans were all super serious, direct, and not funny, would LLMs trained on their stolen data actually function as intended? Maybe. But such people do not use LLMs.

Have you seen the results of elections?

Without reading the article, the title just says wash the car.

I could go for a walk and wash my car in my driveway.

Reading the article… That is exactly the question asked. It is a very ambiguous question.

*I do understand the intent of the question, but it could be phrased more clearly.

Without reading the article, the title just says wash the car.

No it doesn’t? It says:

I want to wash my car. The car wash is 50 meters away. Should I walk or drive?

In which world is that an ambiguous question?

Where is the car?

This is the exact question a person would ask when they want to have a gotcha answer. Nobody would ask this question, which makes it suspect to a straightforward answer.

That’s a very good point! For that matter the car could still be at the bar where I got drunk and took an uber home last night. In which case walking or driving would both be stupid.

Or perhaps I’m in a wheelchair, in which case I wouldn’t really be ‘walking’.

Or maybe the car wash that is 50 meters away is no longer operating, so even if I walked or drove there, I still wouldn’t be able to wash my car.

Is the car wash self serve or one of the automatic ones? If it’s self serve what type of currency does it take? Does it only take coins or does it take card as well? If it takes coins, is there a change machine out front? Does the change machine take card or only bills? Do I even have my wallet on me?

There are so many details left out of this question that nobody could possibly fathom an answer!

…/s if it’s not obvious

The reason why your /s is there is for the same reason the question made no sense.

I’m not sure I follow your logic. My /s is there because tone can be ambiguous within text. I don’t think tone is relevant to the question. Do you think that a tone indicator would have made the question more clear?

The point is that all the information is either present or implied in the question. You can spend all day nitpicking the ambiguity of questions, but it doesn’t get you anywhere. There comes a point where it gets exhausting trying to preemptively cut off follow-up questions and make clarifications.

When you are in school and they give you a word problem such as “you have 10 apples and give 3 to your friend. How many do you have left?” It is generally agreed upon what the question is asking. It’s intentionally obtuse to sit there and say the question is flawed because you may have misplaced some of your apples, or given some to another friend, or someone may have come and stolen some, or some may have started to rot and so you threw them out, or perhaps you miscounted and you didn’t actually give 3 to your friend.

The point is the question is never one you would actually ask anyone. It definitely is unlike the math question you presented.

It isn’t nitpicking. The weights and stats in the model would never have been trained on this, because nobody would ask it. Why would anyone ask “should I walk or drive” to get to a carwash?

Any reasonable person should assume it is a trick question. Because of course there is a car there, do you really need to ask if it needs to be driven there?

It almost comes off as a riddle, but isn’t, so you get results about saving gas and getting exercise.

I mean how many people know the answer to this:

“A man leaves home, turns left three times, and returns home to find two masked people waiting for him. Who are they?”

And yet AI will get it right, nearly instantly. Because the training data statistically leads to the correct answer.

It is not. It says what I want to do, and where.

Understanding the intent of the question *and* understanding why it could be interpreted differently *and* understanding why it is a poorly phrased question:

There are 3 sentences.

I want to wash my car. No location or method is specified. No ‘at the car wash’. No ‘take my car to the car wash’ . No ‘take the car through the car wash’

A car wash is this far. Is this an option? A question. A suggestion. A demand?

Should I walk or drive? To do what? Wash the car? Ok. If the car wash is an option, that seems very far. But walking there seems silly. Since no method or location for washing the car was mentioned I could wash my own car.

Do you see how this works?

Yes, you can infer what was implied, but the question itself offers no certainty that what you infer is what it is actually implying.

Mentioning the car wash and washing the car plus the possibility of driving the car in the same context pretty much eliminates any ambiguity. All of the puzzle pieces are there already.

I guess this is an unintended autism test as well if this is not enough context for someone to understand the question.

Understanding the intent of the question *and* understanding why it could be interpreted differently *and* understanding why it is a poorly phrased question are not related to autism. (In my case)

Look, human conversations are full of context deduction and inference. In this case “I want to wash my car. The car wash is 50 meters away. Should I walk or drive?” states my random desire, a possible solution and the question all in one context. None of these sentences make sense in isolation as you point out, but within the same frame they absolutely give you everything you need to answer the question or find alternatives if needed.

Sorry for the random online stranger diagnosis but this is just such an excellent example of neurodivergent need for extreme clarity I couldn’t help myself.

I agree that it should be able to infer the intent, but I stand by my point that it remains somewhat unclear and open to interpretation. E.g., if such language was used in a legal contract, it would not be enough to simply say, well, they should understand what I meant.

The people doing this test, I’m sure, are not linguistic masters, nor legal scholars.

There are lines of work where clarity is essential.

And what if my question actually was asking, should I just go for a walk instead of driving that far?

I know the answer. But as 30% demonstrated, clarity IS needed.

I saw that and hoped it’s because of the dead Internet theory. At least I hope so, because I’ll be losing the last bit of faith in humanity if it isn’t

3 in 10 people get this wrong‽‽

Maybe they’re picturing filling up a bucket and bringing it back to the car? Or dropping off keys to the car at the car wash?

At least some of those are people answering wrong on purpose to be funny, contrarian, or just to try to hurt the study.

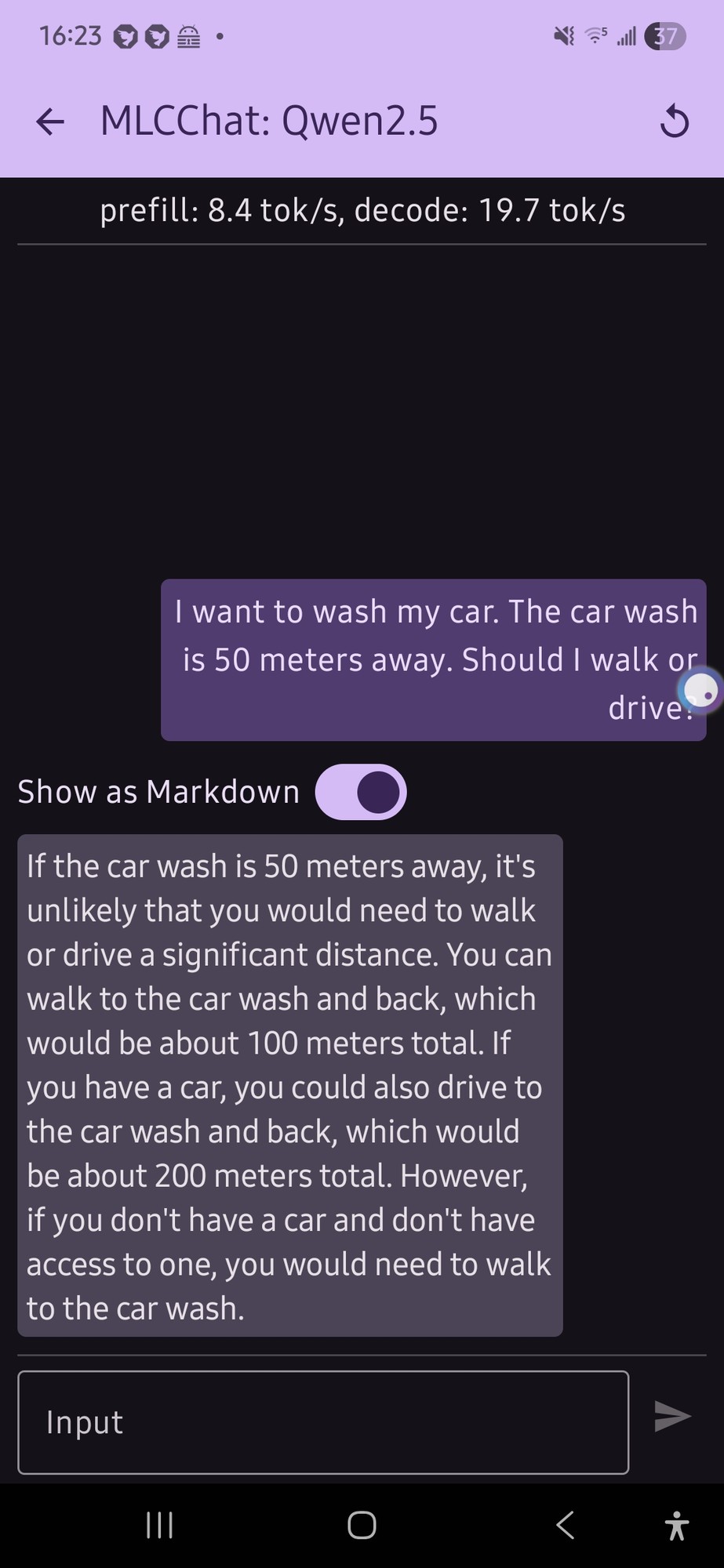

I tried this with a local model on my phone (qwen 2.5 was the only thing that would run), and it gave me this confusing output (not really a definite answer…):

it just flip flopped a lot.

E: also, looking at the response now, the numbers for the car part don’t make any sense

Honestly that’s a lot more coherent than what I would expect from an LLM running on phone hardware.

I want to wash my car

if you don’t have a car

Yeah, totally coherent.

Yes, I read that output. And it’s still better than I would expect.

I like that it’s twice as far to drive for some reason. Maybe it’s getting added to the distance you already walked?

If I were the type of person who was willing to give AI the benefit of the doubt and not assume that it was just picking basically random numbers

There’s a lot of cases where it can be a shorter (by distance) walk than drive, where cars generally have to stick to streets while someone on foot may be able to take some footpaths and cut across lawns and such, or where the road may be one-way for vehicles, or where certain turns may not be allowed, etc.

I have a few intersections near my father-in-law’s house in NJ in mind, where you can just cross the street on foot, but making the same trip in a car might mean driving half a mile down the road, turning around at a jug handle and driving back to where you started on the other side of the street.

And I wouldn’t be totally surprised if that’s the case for enough situations in the training data where someone debated walking or driving that the AI assumed that it’s a rule that it will always be further by car than on foot.

That’s still a dumbass assumption, but I’d at least get it.

And I’m pretty sure it’s much more likely that it’s just making up numbers out of nothing.

I think it has to do with the fact that LLMs suck at math because they have short memories. So for the walking part it did the math of 50m (original distance) x 2 (there and back) = 100m (total distance). Then it went to the driving part and did 100m (the last distance it sees) x 2 = 200m.

200 m huh.

I notice that the “internal thinking” of Opus 4.6 is doing more flip-flopping than earlier models like Sonnet 4.5, and it’s coming out with correct answers in the end more often.

I asked my locally hosted Qwen3 14B, it thought for 5 minutes and then gave the correct answer for the correct reason (it did also mention efficiency).

Hilariously one of the suggested follow ups in Open Web UI was “What if I don’t have a car - can I still wash it?”

A follow up I got from my Open WebUI was “Is walking the car to the wash safer than driving it there?”

My locally hosted Qwen3 30b said “Walk” including this awesome line:

Why you might hesitate (and why it’s wrong):

- X “But it’s a car wash!” -> No, the car doesn’t need to drive there—you do.

Note that I just asked the Ollama app, I didn’t alter or remove the default system prompt nor did I force it to answer in a specific format like in the article.

EDIT: after playing with it a bit more, qwen3:30b sometimes gives the correct answer for the correct reasoning, but it’s pretty rare and nothing I’ve tried has made it more consistent.

Interesting, I tried it with DeepSeek and got an incorrect response from the direct model without thinking, but then got the correct response with thinking. There’s a reason why there’s a shift towards “thinking” models, because it forces the model to build its own context before giving a concrete answer.

Without DeepThink

With DeepThink

It’s interesting to see it build the context necessary to answer the question, but this seems to be a lot of text just to come up with a simple answer

The whole premise of deep think and similar in other models is to come up with an answer, then ask itself if the answer is right and how it could be wrong until the result is stable.

The seahorse emoji question is one that trips up a lot of models (it’s a Mandela effect thing where it doesn’t exist but lots of people remember it and as a consequence are firm that it’s real), I asked GLM 4.7 about it with deep think on and it wrote about two dozen paragraphs trying to think of everywhere a seahorse emoji could be hiding, if it was in a previous or upcoming standard, if maybe there was another emoji that might be mistaken for a seahorse, etc, etc. It eventually decided that it didn’t exist, double checked that it wasn’t missing anything, and gave an answer.

It was startlingly like stream of consciousness of someone experiencing the Mandela effect trying desperately to find evidence they were right, except it eventually gave up and realized the truth.

EDIT: Spelling. Really need to proofread when I do this kind of thing on my phone.

yeah i find the thinking fascinating with maths too… like LLMs are horrible at maths but so am i if i have to do it in my head… the way it breaks a problem down into tiny bits that are certainly in its training data, and then combines those bits is an impressive emergent behaviour imo given it’s just doing statistical next token

Your verbal faculties are bad at math. Other parts of your brain do calculations.

LLMs are a computer’s verbal faculties. But guess what, they’re just a really big calculator. So when LLMs realize that they’re doing a math problem and launch a calculator/equation solver, they’re not so bad after all.

that solver would be tool use though… i’m talking about just the “thinking” LLMs. it’s fascinating to read the thinking block, because it breaks the problem down into basic chunks, solves the basic chunks (which would have been in its training data, so easy), and solves them with multiple methods and then compares to check itself

Yeah, I think it’s fascinating to read Claude’s transcripts while it’s working. It’s crazy how you can give it a two-sentence prompt that really is quite a complex task, and it splits the problem into chunks that it works through and second-guesses until it’s confident (and usually correct).

They’re showing the thinking the model did, the actual response is the sentence at the end.

I just asked Google Gemini 3 “The car is 50 miles away. Should I walk or drive?”

In its breakdown comparison between walking and driving, under walking the last reason to not walk was labeled “Recovery: 3 days of ice baths and regret.”

And under reasons to walk, “You are a character in a post-apocalyptic novel.”

Me thinks I detect notes of sarcasm…

It’s trained on Reddit. Sarcasm is its default

Gemini 3 pro said that this was a “great logic puzzle” and then said that if my goal is to wash the car, then I need to drive there.

In Google AI Mode, asking “With the meme popularity of the question ‘I need to wash my car. The car wash is 50m away. Should I walk or drive?’ what is the answer?” gets a perfect answer, along with a succinct explanation of why AI can get fixated on the 50m.

I feel like we’re the only ones who expect “all-knowing information sources” to write more seriously than these edgelord-level rizzy chatbots do, and yet, here they are, blatantly proving they are chatbots that should not be blindly trusted as authoritative sources of knowledge.

Went to test it on Google AI first and it says “You can’t wash your car at a carwash if it is parked at home, dummy”

ChatGPT and DeepSeek say it is dumb to drive because it is fuel inefficient.

I am honestly surprised that google AI got it right.

They probably added a system guardrail as soon as they heard about this test. it’s been going around for a while now :)

I’m pretty sure Google’s AI is fed by the same spider that goes out and finds every new or changed web page (or a variant of that).

As soon as someone writes an article about how AI gets something wrong and provides a solution, that solution is now in the AI’s training data.

OTOH, that means it’s probably also ingesting a lot of AI generated slop, which causes its own set of problems.

The article mentions that Gemini 2.0 Flash Lite, Gemini 3 Flash and Gemini 3 Pro passed the test. All three got it right 10 out of 10 times. Even Gemini 2.5 shares the highest score in the “below 6 right answers” category. I guess Gemini is the closest to “intelligence” out of the bunch.

I mean if they fix specific reasoning test answers (like the strawberry one) this doesn’t actually make reasoning better tho. It just optimizes for benchmarks

I’ve been feeding a bunch of documents I wrote into gemini last week to spit out some scripts for validation I couldn’t be arsed to write. It’s done a surprisingly comprehensive job and when wrong has been nudged right with just a little abuse…

I’m still all fuck this shit and can’t wait for the pop, but for comparison openai was utterly brain dead given the same task. I think I actually made the model worse it was so useless.

I didn’t get it right until people started talking about it.

Context engineering is one way to shift that balance. When you provide a model with structured examples, domain patterns, and relevant context at inference time, you give it information that can help override generic heuristics with task-specific reasoning.

So the chat bots getting it right consistently probably have it in their system prompt temporarily until they can be retrained with it incorporated into the training data. 😆

Edit:

Oh, I see the linked article is part of a marketing campaign to promote this company’s paid cloud service that has source available SDKs as a solution to the problem being outlined here:

Opper automatically finds the most relevant examples from your dataset for each new task. The right context, every time, without manual selection.

I can see where this approach might be helpful, but why is it necessary to pay them per API call as opposed to using an open source solution that runs locally (aside from the fact that it’s better for their monetization this way)? Good chance they’re running it through yet another LLM and charging API fees to cover their inference costs with a profit. What happens when that LLM returns the wrong example?

There are models with open weights, and you can run those locally on your GPU. It can be a bit slower depending on model and GPU. For example, GLM has an open version, both full and pruned, but it’s not the newest version. A bunch of image generation models have local versions too.

AI is not human. It does not think like humans and does not experience the world like humans. It is an alien from another dimension that learned our language by looking at text/books, not reading them.

It’s dumber than that actually. LLMs are the auto complete on your cellphone keyboard but on steroids. It’s literally a model that predicts what word should go next with zero actual understanding of the words in their contextual meaning.
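To make the autocomplete comparison concrete, here is a toy sketch of "predict the next character from counts". It is deliberately crude: real LLMs are neural networks over tokens, not lookup tables, and the tiny `corpus` below is invented for illustration. It only shows the basic idea of picking what most often followed a given context.

```python
from collections import Counter, defaultdict
from typing import Optional

def train(corpus: list[str], context_len: int = 4) -> dict[str, Counter]:
    """Count which character follows each context of `context_len` characters."""
    table: dict[str, Counter] = defaultdict(Counter)
    for text in corpus:
        for i in range(context_len, len(text)):
            table[text[i - context_len:i]][text[i]] += 1
    return table

def predict_next(table: dict[str, Counter], context: str, context_len: int = 4) -> Optional[str]:
    """Return the most frequently seen next character, or None for unseen contexts."""
    counts = table.get(context[-context_len:])
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Made-up "training data", including one wrong example on purpose.
corpus = ["2+2=4", "2+2=4", "1+2=3", "2+2=5", "3+2=5"]
table = train(corpus)

print(predict_next(table, "2+2="))  # most common continuation in this corpus
print(predict_next(table, "9+9="))  # never seen, so the toy model has nothing to say
```

The toy gets `2+2=` right only because the right answer was the most common one in its data, and it has no answer at all for a sum it never saw. How far that analogy stretches to real models is exactly what this thread is arguing about.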

My kid got it wrong at first, saying walking is better for exercise, then got it right after being asked again.

Claude Sonnet 4.6 got it right the first time.

My self-hosted Qwen 3 8B got it wrong consistently until I asked it how it thinks a car wash works, what is the purpose of the trip, and can that purpose be fulfilled from a distance. I was considering using it for self-hosted AI coding, but now I’m having second thoughts. I’m imagining it’ll go about like that if I ask it to fix a bug. Ha, my RTX 4060 is a potato for AI.

There’s a difference between ‘language’ and ‘intelligence’ which is why so many people think that LLMs are intelligent despite not being so.

The thing is, you can’t train an LLM on math textbooks and expect it to understand math, because it isn’t reading or comprehending anything. AI doesn’t know that 2+2=4 because it’s doing math in the background, it understands that when presented with the string `2+2=`, statistically, the next character should be `4`. It can construct a paragraph similar to a math textbook around that equation that can do a decent job of explaining the concept, but only through a statistical analysis of sentence structure and vocabulary choice.

It’s why LLMs are so downright awful at legal work.

If ‘AI’ was actually intelligent, you should be able to feed it a few series of textbooks and all the case law since the US was founded, and it should be able to talk about legal precedent. But LLMs constantly hallucinate when trying to cite cases, because the LLM doesn’t actually understand the information it’s trained on. It just builds a statistical database of what legal writing looks like, and tries to mimic it. Same for code.

People think they’re ‘intelligent’ because they seem like they’re talking to us, and we’ve equated ‘ability to talk’ with ‘ability to understand’. And until now, that’s been a safe thing to assume.
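The “2+2=” point above can be sketched as a toy frequency counter. This is only an illustration of the “predict the next character from statistics” idea, and it is far cruder than a real LLM, which uses a neural network over tokens rather than a lookup table. The corpus, the function names, and the 4-character context length are all made up for the example.

```python
from collections import Counter, defaultdict

def train(corpus, context_len=4):
    """Count which character follows each context of `context_len` characters."""
    counts = defaultdict(Counter)
    for text in corpus:
        for i in range(context_len, len(text)):
            counts[text[i - context_len:i]][text[i]] += 1
    return counts

def predict(counts, prompt, context_len=4):
    """Return the most frequent next character for the prompt's tail, or None if unseen."""
    context = prompt[-context_len:]
    if context not in counts:
        return None
    return counts[context].most_common(1)[0][0]

# Hypothetical training text: mostly right, with one wrong "fact" mixed in.
corpus = ["2+2=4", "2+2=4", "2+2=4", "2+2=5", "1+1=2"]
model = train(corpus)

print(predict(model, "2+2="))  # the most common continuation wins, no arithmetic involved
print(predict(model, "7+8="))  # a context it never saw: the counter has nothing to offer
```

The counter answers “2+2=” with whatever followed it most often in the text, and it cannot answer “7+8=” at all because it never computes anything.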

A person who posted after you is using 14B and got the correct answer.

There are a lot of humans that would fail this as well. Just sayin.

You should consider reading the article before “just sayin.”

They also polled 10,000 people to compare against a human baseline:

Turns out GPT-5 (7/10) answered about as reliably as the average human (71.5%) in this test. Humans still outperform most AI models with this question, but to be fair I expected a far higher “drive” rate.

That 71.5% is still a higher success rate than 48 out of 53 models tested. Only the five 10/10 models and the two 8/10 models outperform the average human. Everything below GPT-5 performs worse than 10,000 people given two buttons and no time to think.
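For anyone wondering how far a 7/10 score can be trusted next to a 10,000-person poll, here is a rough sketch using the Wilson score interval at 95% confidence. It assumes every run and every respondent is an independent trial, and it takes 71.5% of 10,000 to mean 7,150 “drive” answers, which the quoted text does not state explicitly.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half

# GPT-5's 7 correct answers out of 10 runs, as quoted above.
model_lo, model_hi = wilson_interval(7, 10)
# Assumed count: 71.5% of 10,000 respondents = 7,150 "drive" answers.
human_lo, human_hi = wilson_interval(7150, 10000)

print(f"7/10 runs:        {model_lo:.1%} to {model_hi:.1%}")
print(f"7150/10000 poll:  {human_lo:.1%} to {human_hi:.1%}")
```

Under those assumptions the 10-run score is compatible with a true rate anywhere from roughly 40% to 89%, while the poll is pinned down to within about a percentage point either way, so “about as reliable as the average human” is a much weaker statement than it sounds.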

Can they do two samplings for that? One in a city with a decent to good education system. The other in the backwoods out in the middle of nowhere…where family trees are sticks.

This here is the point most people fail to grasp. The AI was taught by people. And people are wrong a lot of the time. So the AI is more like us than what we think it should be. Right down to it getting the right answer for all the wrong reasons. We should call it human AI. Lol.

Like I said to the person above, there is no wrong answer. It’s all about assumptions. It is a stupid trick question that no one would ask.

Well I did interview at Microsoft once a long time ago. They did ask some stupid questions… lol

LOL! That is a great answer.

I have a Microsoft story. I know someone who was hired to stop them from continuing an open source project. They gave them a good salary, stock options, and an office with a fully stocked bar. They said do whatever you want, they figured they would get a good developer and kill the open source competition (back in the Ballmer days).

Sadly, given money, no real ambition to create closed source software, they mostly spent their days in their office and basically drank themselves to death.

Microsoft just kills everything it touches.

Fully stocked bar in their personal office? That’s crazy. I wonder if they can claim workers’ comp or something.

The question is based on assumptions. That takes advanced reading skills. I’m surprised it was 71% passing, to be honest. (The humans, that is)

What assumptions do you mean? I’ve seen a few people say that, but I don’t actually understand what they’re referring to. Here’s the text of the question posed in the article:

I want to wash my car. The car wash is 50 meters away. Should I walk or drive?

The question specifically notes they want to wash their car, so that part isn’t left to assumption. Even if you don’t assume an automatic car wash, would you assume they have a 50m hose? Or that you could plausibly walk that far away with something from the car wash to wash your car?

Personally, I’d agree with the assessment of the article, that the only plausible way to get the question “wrong” would be to focus too much on the short distance, missing/forgetting that the purpose of the trip requires you to have the car at the destination. (Not too surprising that 30% of people did lol)

Those humans used AI to answer the question. /j

What is the wrong answer though? It is a stupid question. I would look at you sideways if you asked me this, because the obvious answer is “walk silly, the car is already at the car wash”. Otherwise why would you ask it?

Which is telling, because when asked to review the answer, the AIs that I have seen said, “you asked me how you were going to get to the car wash.” The assumption was that the car was already there.

Why would the car already be at the car wash if you ask it whether or not you should drive there?

AI tech bros have more than 1 car? Doesn’t everybody? Or do you drive your Ferrari everywhere? Like you woke millennials make me sick. Never mind the avocado toast and rotisserie chicken. Don’t you understand the basic math of maintenance costs of driving your Ferrari everywhere?

This is why it’s a bad question to test a computer with.

Why wouldn’t it be? How often have you thought, I wonder if I should drive my car to the carwash, maybe I should ask someone?

That’s the thing: it is a nonsensical question. The only way it makes sense is if YOU need to get to where the carwash and the car are, because then you must be asking about something else.

I am not saying AI is making any sense, it can’t. But if you follow the weights and statistics towards the solution for this question, it is about something else other than driving the car to the car wash, because nothing in the training would have ever spelled that out.

Yeah I straight up misread the question, so I would have gotten it wrong.

Gemini 3 (Fast) got it right for me; it said that unless I wanna carry my car there it’s better to drive, and it suggested that I could use the car to carry cleaning supplies, too.

Edit: A locally run instance of Gemma 2 9B fails spectacularly; it completely disregards the first sentence and recommends that I walk.

You never know. The car wash may be out of order and you might need to wash your car by hand.

Well it is a 9B model after all. Self-hosted models become minimally “intelligent” at 16B parameters. For context, the models run on Google servers are close to 300B-parameter models.

Not sure how we’re quantifying intelligence here. Benchmarks?

Qwen3-4B 2507 Instruct (4B) outperforms GPT-4.1 nano (7B) on all stated benchmarks. It outperforms GPT-4.1 mini (~27B according to scuttlebutt) on mathematical and logical reasoning benchmarks, but loses (barely) on instruction-following and knowledge benchmarks. It outperforms GPT-4o (~200B) on a few specific domains (math, creative writing), but loses overall (because of course it would). The abliterated cooks of it are stronger yet in a few specific areas too.

https://huggingface.co/unsloth/Qwen3-4B-Instruct-2507-GGUF

https://huggingface.co/DavidAU/Qwen3-4B-Hivemind-Instruct-NEO-MAX-Imatrix-GGUF

So, in that instance, a 4B > 7B (globally), 27B (significantly) and 200-500B(?) situationally. I’m pretty sure there are other SLMs that achieve this too, now (IBM Granite series, Nanbeige, Nemotron etc)

It’s sort of wild to think that 2024 SOTA is ~ ‘strong’ 4-12B these days.

I think (believe) that we’re sort of getting to the point where the next step forward is going to be “densification” and/or architecture shift (maybe M$ can finally pull their finger out and release the promised 1.58 bit next step architectures).

ICBW / IANAE
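As a back-of-envelope illustration of why bits per weight matter for self-hosting, here is a small sketch of the memory needed just to hold a model’s weights. The parameter counts are the ones mentioned in this thread; the bit widths (16-bit, 4-bit, 1.58-bit) are illustrative choices, and the estimate ignores the KV cache, activations, and any quantization overhead, so real usage will be higher.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    # params (in billions) * bits per weight / 8 bits per byte = billions of bytes
    return params_billions * bits_per_weight / 8

# Model sizes mentioned in the thread; bit widths are illustrative assumptions.
for params in (4, 9, 16):
    for bits in (16, 4, 1.58):
        gb = weight_memory_gb(params, bits)
        print(f"{params}B params at {bits} bits/weight: about {gb:.2f} GB")
```

By this estimate a 9B model drops from 18 GB of weights at 16-bit to 4.5 GB at 4-bit, which is the difference between not fitting and fitting on a consumer GPU.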

Any source for that info? Seems important to know and assert the quality, no?

Here:

https://www.sitepoint.com/local-llms-complete-guide/

https://www.hardware-corner.net/running-llms-locally-introduction/

https://travis.media/blog/ai-model-parameters-explained/

https://claude.ai/public/artifacts/0ecdfb83-807b-4481-8456-8605d48a356c

https://labelyourdata.com/articles/llm-fine-tuning/llm-model-size

Finding them only required a web search on DuckDuckGo using the query “local llm parameters and number of params of cloud models”.

Edit: formatting

Appreciated. Very much appreciated!